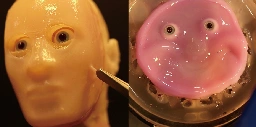

This smiling robot face made of living skin is absolute nightmare fuel

ylai (@ylai@lemmy.ml) · Posts: 480 · Comments: 46 · Joined 2 yr. ago

Dr Disrespect Admits To 'Inappropriate' Messages With Minor: 'I'm No Fucking Predator Or Pedophile'

Nearly 6 months later, Palworld devs confirm Nintendo never drew so much as an inch of its legal sword over bootleg Pokémon allegations

Dr Disrespect fired by the game studio he co-founded: 'It is our duty to act with dignity on behalf of all individuals involved'

Xbox executives just cannot give a straight answer to questions about Tango Gameworks

How Embracer's cuts killed a potential Red Faction sequel and gutted a promising studio

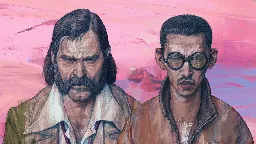

CD Projekt passes the 'Best DLC' crown to FromSoftware with Witcher-themed Elden Ring art

New 'Washington Post' chiefs can’t shake their past in London

Russia continues work on homegrown game console despite technology and scale issues

In 1989, a Nintendo bigwig licensed SimCity on the spot by sliding a million dollar check across the table

A follow-up to the legendary Disco Elysium might have been ready to play within the next year—ZA/UM's devs loved it, management canceled it and laid off the team

Microsoft backs up Geoff Keighley after he ignited console warrior outrage over the Gears of War: E-Day trailer

Three side remarks about China, which can be a peculiar point of comparison for Russia, and perhaps for any other country: