Dad demands OpenAI delete ChatGPT’s false claim that he murdered his kids

Dad demands OpenAI delete ChatGPT’s false claim that he murdered his kids

Dad demands OpenAI delete ChatGPT’s false claim that he murdered his kids

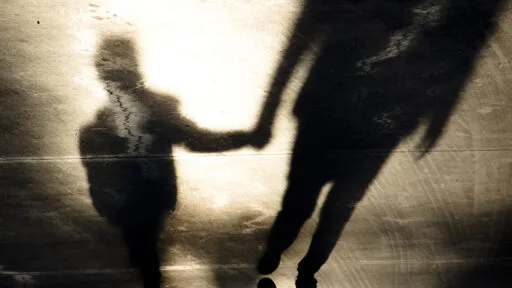

A Norwegian man said he was horrified to discover that ChatGPT outputs had falsely accused him of murdering his own children.

According to a complaint filed Thursday by European Union digital rights advocates Noyb, Arve Hjalmar Holmen decided to see what information ChatGPT might provide if a user searched his name. He was shocked when ChatGPT responded with outputs falsely claiming that he was sentenced to 21 years in prison as "a convicted criminal who murdered two of his children and attempted to murder his third son," a Noyb press release said.

It's AI. There's nothing to delete but the erroneous response. There is no database of facts to edit. It doesn't know fact from fiction, and the response is also heavily skewed by the context of the query. I could easily get it to say the same about nearly any random name just by asking it about a bunch of family murders and then asking about a name it doesn't recognize. It is more likely to assume that person is in the same category as the others, especially if one or more of the names has any association (real or fictional) with murder.

I don't care why. That is still libel, and it is illegal for good reason. If you can't stop this in all cases, then your AI is, and should be, illegal.

None of the moneybags will listen, unfortunately. But I'm with you. The rollout of AI was extremely irresponsible, done just to make it profitable as quickly as possible.

Seems to me libel would require AI to have credibility, which it does not.

It's a tool. Like most useful tools it can do harmful things. We know almost nothing about the provenance of this output. It could have been poisoned either accidentally or deliberately.

But above all, the problem is ignorant people believing the output of AI is the truth. It's pretty good at some things, but the more esoteric the knowledge, the less reliable it is. It's best to treat AI as a storyteller. Yeah, there are a lot of facts in there, but when they don't serve the story they can be embellished. I don't see the harm in just acknowledging that and moving on.

Except it's not libel. It's a one-time string of text generated exclusively for him. Literally no one would have known what it said if the guy hadn't gotten the exact thing he wants "deleted" published online for everyone to see. Now it'll be linked to his name forever, but the LLM didn't do that.

Libel requires the claims to be published or broadcast, so it isn't libel. A predictive text algorithm strung some random words together, and the guy got offended.

It's like suing because your phone keyboard autosuggested "is a murderer" as the next words after you wrote your name. Btw, I tried it a few times for lulz and managed to get it to write out "bluGill and the kids are going to get it on", so I guess you can sue Google now?

I read it as them not using libel as the basis for their complaint, but rather failure to comply with the GDPR.

I have this gun machine that shoots in all directions randomly. I can't predict it, so I can't stop it from shooting you. So sorry. It's uncontrollable.

Yeah, but I can just ignore the bullets because they are Nerf. And I have my own Nerf guns as well.

I mean at some point any analogy fails, but AI is nothing like a gun.

If creating text is like shooting bullets, we should require a license for text editors.

Maybe people need to learn that AI hallucinates.

I'm sorry, as an American, I'm not seeing the problem. Don't you just need a second gun that shoots in random directions to stop the first gun? And then a third gun to shoot the 2nd gun? I mean come on now, this is basic 3rd grade common sense!

From the GDPR's standpoint, I wonder whether it's still personal information if it's made-up bullshit. The thing is, this could have weird outcomes. For example, by the letter of the law, OpenAI might be liable again every time it gives the same answer to the same query.

Then again, made-up bullshit aside, this should be quite a clear indicator of an actual GDPR breach.

Funny how everyone around here laughs at free speech when it's for humans, but when it's a text generator, suddenly there are abstract principles preventing anyone from suing the living crap out of all "AI" companies, at least until they are bleeding enough to start putting up disclaimers brighter than Vegas saying it's a word salad machine that doesn't think, know, claim, dispute, judge, or reason.

Isn’t that a great tool for generating nonsense datasets to somehow poison the big data of trackers? 🤔

They can just put in a custom regex to filter out certain things. It'll be a bit performative since it does nothing to stop novel misinformation, but it would prevent it from saying what it's legally required not to say.

Well, it wouldn't really, it would say it and just hide it under a message saying it violates boundaries. It's all a bunch of performative bullshit, actually.

For example, the things it's required not to say would actually be perfectly fine in the realm of fiction or satire or a game of Simon says, but that'll be disallowed, as well, because the model can't actually tell the difference.
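A minimal Python sketch of the output-side regex filter suggested above, assuming a hypothetical blocklist. `BLOCKED_PATTERNS`, `filter_output`, `REFUSAL`, and the placeholder name "John Doe" are all illustrative inventions, not anything OpenAI is known to use. The sketch also shows the limitations raised in the replies: the text is still generated and only withheld afterward, a rephrasing slips through, and harmless text that happens to match gets blocked too.

```python
import re

# Hypothetical blocklist. "John Doe" is a placeholder name; a real
# deployment would need an entry per person/claim it must suppress.
BLOCKED_PATTERNS = [
    re.compile(r"\bJohn Doe\b.*\bmurder", re.IGNORECASE | re.DOTALL),
]

REFUSAL = "[This response was withheld by a content filter.]"


def filter_output(model_text: str) -> str:
    """Post-process text the model has ALREADY generated.

    The model itself is unchanged: matching text is hidden, not prevented.
    """
    for pattern in BLOCKED_PATTERNS:
        if pattern.search(model_text):
            return REFUSAL
    return model_text


# Blocked: matches the pattern.
print(filter_output("John Doe murdered his children."))
# Not blocked: a paraphrase ("killed") is novel misinformation the regex misses.
print(filter_output("John Doe killed his children."))
# Blocked even though harmless: the regex can't tell context apart.
print(filter_output("A story where John Doe is a murder-mystery author."))
```

The design choice here is the crudest one possible (pattern match on the final text), which is why it can only hide specific known strings rather than change what the model tends to produce.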

And it's the LLM owner's problem to figure out how to fix.

Which is why OpenAI should compensate anyone they have damaged in some way, and yes, that would mean it would cease to exist overnight. That's because a criminal organization shouldn't be profitable in the first place.

You can tweak the weights, though.

Tweaking weights is no guarantee and can easily affect completely unrelated things.

Nobody would sue over a dirty context.

The fact that you chose to make your data storage unreadable doesn't relieve you of the responsibilities inherent in storing the data.

Throwing away my car key won't protect me from paying the parking tickets I accrue while being physically unable to move my car.

It's not unreadable, it doesn't exist.

The responses are just whatever statistically sounds vaguely like what you want to hear.

They can erase the chat responses, but that won't stop it from generating it again.

Generative AI doesn't start with facts and work from there. It's just statistically what you want to hear.
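To make the "statistically what you want to hear" point concrete, here is a toy bigram next-word predictor in Python. It is a deliberately crude stand-in, not how ChatGPT works internally (real LLMs are neural networks over tokens with far longer context), and the tiny corpus and the name "Doe" are made up for illustration. The name never appears in the training text, yet the continuation confidently follows whatever most often came after "convicted" elsewhere.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus: str) -> dict:
    """Count, for each word, which words followed it in the corpus."""
    words = corpus.lower().split()
    table = defaultdict(Counter)
    for prev, nxt in zip(words, words[1:]):
        table[prev][nxt] += 1
    return table


def continue_text(table: dict, prompt: str, n_words: int) -> str:
    """Greedily append the most frequent follower of the last word.

    No facts are looked up; only co-occurrence counts are used.
    """
    out = prompt.lower().split()
    for _ in range(n_words):
        followers = table.get(out[-1])
        if not followers:  # unseen word: nothing to predict
            break
        out.append(followers.most_common(1)[0][0])
    return " ".join(out)


# Made-up corpus: two of the three sentences end in "murder".
corpus = (
    "smith was convicted of murder . "
    "jones was convicted of murder . "
    "brown was convicted of fraud ."
)
model = train_bigrams(corpus)

print("doe" in model)  # False: the model knows nothing about "doe"
print(continue_text(model, "Doe was convicted", 2))  # doe was convicted of murder
```

Nothing in the table says anything about "doe"; the output is driven entirely by the prompt's framing and the word counts, which is also why deleting one bad response does not stop the same continuation from being produced again.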